5 Steps to Human Movement Analysis with Movesense Sensors and Machine Learning

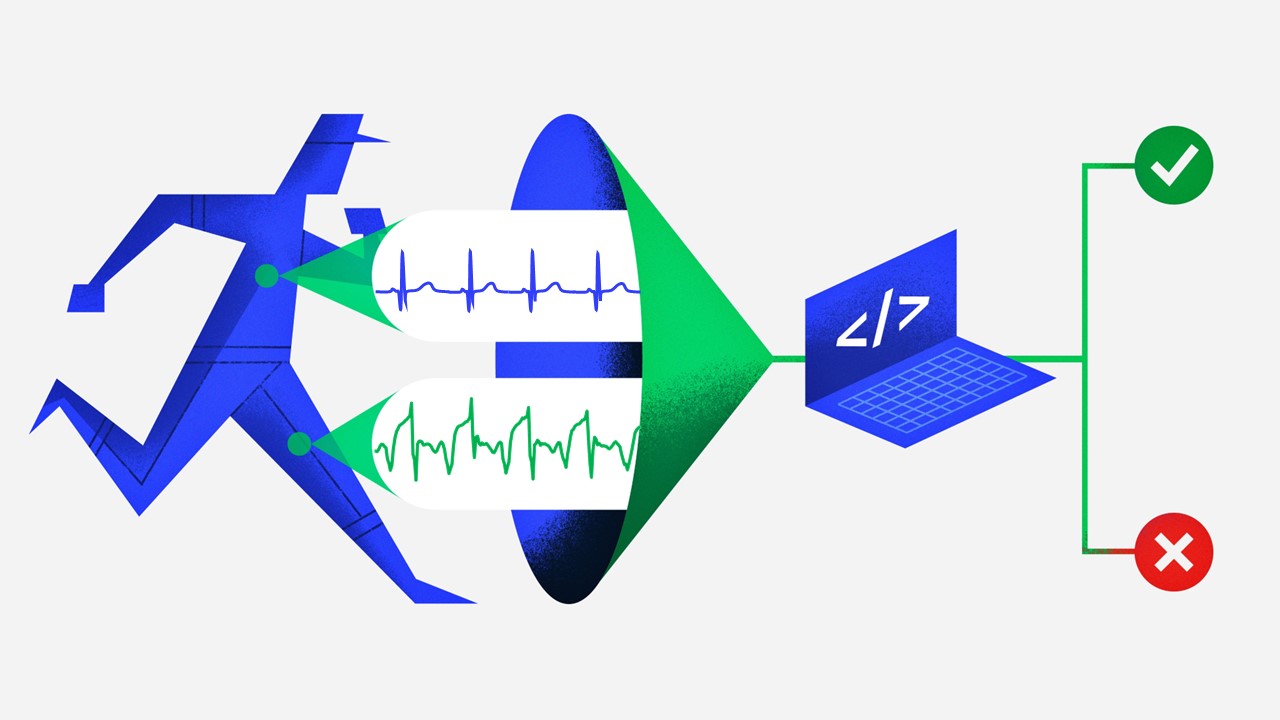

Human movement analysis is a rapidly evolving field of research and development with a wide range of practical applications. Progress is driven in particular by capable wearable sensors and efficient, easy-to-use machine learning tools.

New wearable sensors like Movesense are lightweight and unobtrusive to wear in daily life as well as during any kind of sports. They can measure human movement with high accuracy while simultaneously monitoring physiological parameters such as heart rate and ECG. This makes them ideal for analyzing human activity. Sensors can also monitor the movement of the associated equipment to provide an additional perspective on the activity in question.

To turn these measurements into actionable insights, whether in health or sports, there is a general model based on machine learning and AI that developers follow with success. In this article, we explain the five main steps of the process, using Movesense sensors as an example.

1. Build your measurement model

Human movement analysis starts by describing the phenomenon and the parameters you are interested in. What are the events you want to detect or the activities you want to classify? What makes each class different enough from the others to be identifiable? The answers determine the sensor configuration needed to collect the right type of data. The configuration is a three-step process:

- Decide the number of sensors that you need to capture every movement class in a representative way.

- Select the sensor attachment locations so that the data they produce best describes the movement being measured.

- Define the sensor settings, such as which parameters to measure and at what sampling rate.
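
The three decisions above can be written down as a small configuration record before any data is collected. The following is a minimal sketch, assuming a three-sensor setup on the chest and both upper arms at 52Hz. The class and field names are hypothetical and are not part of any Movesense API.

```python
from dataclasses import dataclass

# Hypothetical record for planning a measurement setup.
# Names are illustrative and do not correspond to any Movesense API.
@dataclass(frozen=True)
class SensorConfig:
    location: str        # where the sensor is attached
    modalities: tuple    # which signals to record, e.g. ("acc", "gyro")
    sample_rate_hz: int  # sampling rate for the recorded signals

    def describe(self) -> str:
        return f"{self.location}: {'+'.join(self.modalities)} @ {self.sample_rate_hz}Hz"

# Assumed example setup: number of sensors, locations, and settings.
setup = [
    SensorConfig("chest", ("acc", "gyro"), 52),
    SensorConfig("left_upper_arm", ("acc", "gyro"), 52),
    SensorConfig("right_upper_arm", ("acc", "gyro"), 52),
]

for sensor in setup:
    print(sensor.describe())
```

Keeping the plan in one place like this makes it easier to record which configuration produced which data file.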

Movesense sensors have a 9-axis inertial measurement unit (IMU), i.e., an accelerometer, a gyroscope and a magnetometer, each with three axes. During data collection, you can connect several Movesense sensors to one receiving device. In addition to movement, the sensors can monitor heart rate, heart rate variability and 1-lead ECG.

For instance, this research project used three sensors, placed on the chest and both upper arms, to classify athlete movements in beach volleyball. Another example from figure skating used four sensors to classify jumps and detect their key technical parameters. Movesense accessories such as the wrist band and chest strap are designed for practical attachment of the sensors.

Movesense IMU sampling rates start from 13Hz and go up to 1.6kHz. For human movement analysis, the most commonly used sampling rate with Movesense is 52Hz, which is fast enough to cover almost all muscle-induced movement. In many cases, 26Hz or even 13Hz sampling is sufficient, which extends the sensor's already good battery life even further.
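
A lower sampling rate also reduces the amount of data you have to store, transfer and label. The sketch below only counts recorded values for the nominal rates named above. It assumes three sensors recording two three-axis signals each, and it says nothing about battery life itself. The function name is illustrative.

```python
AXES_PER_MODALITY = 3  # each IMU signal reports x, y and z

def values_per_hour(sample_rate_hz, n_sensors, n_modalities):
    """Number of scalar values recorded in one hour of measurement."""
    per_second = sample_rate_hz * AXES_PER_MODALITY * n_modalities * n_sensors
    return per_second * 3600

# Assumed setup: 3 sensors, accelerometer + gyroscope on each.
for rate in (13, 26, 52):
    print(f"{rate}Hz: {values_per_hour(rate, n_sensors=3, n_modalities=2):,} values/hour")
```

The count scales linearly with the rate, so dropping from 52Hz to 13Hz cuts the data volume to a quarter.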

Which sensors to use depends on the type of movement to be measured. Activity classification is often doable with acceleration data alone, but detecting certain events and modeling trajectories usually also requires gyroscope data. The magnetometer is useful for removing gyroscope drift and helps detect events and monitor directions.

This article published in 2025 studies in detail the impact of IMU sampling rate, number of sensors, sensor placement and sensor modalities on the accuracy of human movement classification with Movesense sensors. It provides highly useful insights to developers using Movesense sensors for movement classification.

For ECG data, Movesense sensors offer sampling rates from 125Hz to 512Hz. Only one sensor is needed for measuring ECG and heart rate intervals, attached with a chest strap or a glue-on skin electrode. Because of the analog lowpass filter, with a cutoff of 34Hz in Movesense HR2 and Movesense HR+ and 40Hz in Movesense MD and Movesense Flash, sampling at 125Hz already captures all the ECG information that is available in the signal.
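
The reasoning behind the 125Hz figure can be checked against the sampling theorem: a signal is fully captured when the sampling rate exceeds twice its bandwidth. The sketch below treats the filter cutoff as the effective signal bandwidth, which is a simplification because a real analog filter rolls off gradually. The function name is illustrative.

```python
def satisfies_nyquist(sample_rate_hz, bandwidth_hz):
    """True if the sampling rate exceeds twice the signal bandwidth."""
    return sample_rate_hz > 2 * bandwidth_hz

# Lowpass cutoffs as stated in the text, used here as the bandwidth.
FILTER_CUTOFF_HZ = {"HR2 / HR+": 34, "MD / Flash": 40}

for model, cutoff in FILTER_CUTOFF_HZ.items():
    ok = satisfies_nyquist(125, cutoff)
    print(f"{model}: needs more than {2 * cutoff}Hz, 125Hz sufficient: {ok}")
```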

The same sensor can also be used for movement measurement. Adding movement data to ECG measurements can provide useful context for analyzing the ECG findings. For sports use, we recommend Movesense HR2 or HR+, and for health-related measurements, Movesense MD.

2. Collect raw data

Once you have the optimal setup, collect raw data from the activity with your target group. Ensure that you have enough data on the activity that you are modeling. You should cover all aspects of the activity, as well as the individual differences between users. Differences may arise from the body size of the test subjects, environmental factors, performance technique, etc.

A practical tool for data collection is Kaasa Data Collector, an app developed by our software partner Kaasa solution GmbH for collecting, annotating, and managing Movesense sensor data files. Another option is the Movesense showcase app for iOS or Android, which works nicely for projects with smaller amounts of data. With a little bit of coding, it’s also possible to use a PC for collecting Movesense sensor data.

3. Annotate data

At this stage, the movement data from the sensors is in raw form. For instance, for acceleration, you’ll have values in m/s² at your chosen sampling rate for all three axes. To give this data meaning, you need to label it. This is a critical step in any human movement analysis.
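
To make the raw form concrete, the sketch below represents a few accelerometer samples as (x, y, z) tuples in m/s² and computes their magnitude, a common orientation-independent quantity for a first look at the data. The sample values are made up for illustration. A sensor at rest typically reads a magnitude close to 9.81 m/s² because of gravity.

```python
import math

# Hypothetical raw accelerometer samples as (x, y, z) in m/s².
samples = [
    (0.0, 0.0, 9.81),  # at rest, gravity along the z axis
    (3.0, 4.0, 0.0),
    (1.0, 2.0, 2.0),
]

def magnitude(sample):
    """Euclidean norm of one three-axis sample."""
    x, y, z = sample
    return math.sqrt(x * x + y * y + z * z)

magnitudes = [magnitude(s) for s in samples]
for s, m in zip(samples, magnitudes):
    print(s, round(m, 2))
```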

Data annotation, i.e., labeling, is an essential part of the machine learning process and often requires a lot of work. Annotation basically teaches an AI model, and the accuracy of the annotation largely determines how accurate your model will be. That’s why it’s worth putting enough effort into annotation.

Most AI models are designed to recognize and classify so many things that labeling them all in real time during data recording is not possible. In such projects it’s necessary to label the data afterwards. For this purpose, a video stream that is synchronized with the data is usually a must. In addition, you’ll need a practical tool that facilitates fast and accurate labeling.

There are several tools for data labeling available. However, if you already use Kaasa Data Collector, that’s the one optimized for Movesense data. The app has several practical tools for annotating data during and after recording.

For instance, the app offers configurable hot buttons that you can use to mark selected key events during recording. You can also record synchronous video that supports frame-by-frame data labeling. With voice-over, you can add comments during recording that help other people participate in the labeling.

4. Train, test, and tune the machine learning model

When you have finished the annotation, the annotation tool generates a .csv file that includes the data labels and their time stamps. Upload the annotation file to an AI tool together with the corresponding sensor data file and let the tool digest it. The AI tool searches the data for features that characterize the labeled events and builds the first version of the model.
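
Conceptually, combining the two files means assigning each sensor sample the label whose time interval contains it. The sketch below shows one way to do that with the Python standard library. The column names (`start_ms`, `end_ms`, `label`, `timestamp_ms`) and the file contents are assumptions for illustration; the actual export format depends on your annotation tool.

```python
import csv
import io

# Hypothetical file contents; real column names depend on your tools.
ANNOTATIONS_CSV = """start_ms,end_ms,label
0,1000,stand
1000,2500,jump
"""

SENSOR_CSV = """timestamp_ms,ax,ay,az
0,0.1,0.2,9.8
500,0.0,0.3,9.7
1200,3.1,1.0,14.2
2400,2.0,0.5,12.0
3000,0.1,0.1,9.8
"""

def load_rows(text):
    """Parse CSV text into a list of dictionaries keyed by column name."""
    return list(csv.DictReader(io.StringIO(text)))

def label_samples(samples, annotations, default="unlabeled"):
    """Attach to each sample the label whose [start, end) interval contains it."""
    intervals = [(int(a["start_ms"]), int(a["end_ms"]), a["label"]) for a in annotations]
    labeled = []
    for s in samples:
        t = int(s["timestamp_ms"])
        label = next((name for start, end, name in intervals if start <= t < end), default)
        labeled.append((t, label))
    return labeled

labeled = label_samples(load_rows(SENSOR_CSV), load_rows(ANNOTATIONS_CSV))
for t, label in labeled:
    print(t, label)
```

Samples that fall outside every annotated interval are kept with a default label so that they can be reviewed or excluded explicitly.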

After the first iteration, you will have to test and improve the model. You can run it with new data sets, challenge the algorithm with partly missing data, and use other techniques to optimize the detection accuracy.
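
One of these checks, challenging the model with partly missing data, can be sketched as follows. The classifier here is a toy stand-in that thresholds the mean acceleration magnitude of a window; it is not a trained model, and the windows, labels, threshold, and 30% drop rate are all made up for illustration.

```python
import random

def drop_samples(window, fraction, rng):
    """Randomly remove roughly `fraction` of the samples from one window."""
    return [v for v in window if rng.random() >= fraction]

def toy_classifier(window, threshold=12.0):
    """Stand-in for a trained model: thresholds the mean magnitude."""
    if not window:
        return "unknown"
    return "active" if sum(window) / len(window) > threshold else "still"

def accuracy(windows, labels, classify):
    """Fraction of windows whose predicted label matches the annotation."""
    correct = sum(classify(w) == y for w, y in zip(windows, labels))
    return correct / len(labels)

# Hypothetical one-second windows of acceleration magnitude at 52Hz.
windows = [[9.8] * 52, [15.0] * 52, [9.7] * 52, [16.0] * 52]
labels = ["still", "active", "still", "active"]

rng = random.Random(0)  # fixed seed so the experiment is repeatable
degraded = [drop_samples(w, 0.3, rng) for w in windows]

clean_acc = accuracy(windows, labels, toy_classifier)
degraded_acc = accuracy(degraded, labels, toy_classifier)
print("clean:", clean_acc, "with missing samples:", degraded_acc)
```

Comparing the two figures on your own model shows how sensitive it is to dropped samples, for example from a weak wireless connection.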

The most common machine learning tool used in Movesense-related projects is TensorFlow. It comes in different versions for different hardware environments. For training your AI model, however, we recommend the full-blown cloud version.

All major cloud platforms offer their own machine learning solutions. In addition, there are many other options on the market. If you are using any of them with Movesense sensor data, we would be very interested in hearing about your experiences and sharing them with other Movesense users. Feel free to contact us via info@movesense.com on this or any other topic related to your Movesense project.

5. Run your human movement analysis model with actual measurement data

Once you are happy with the accuracy, you are ready to put your AI model to real use. You can use it online or integrate it into a mobile application. Just collect data with the sensors and let your model analyze it.
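
In live use, samples arrive one at a time, so a common pattern is to buffer them in a sliding window and classify each time the window has advanced by a fixed step. The sketch below illustrates the idea with a toy threshold function in place of a trained model. The class name, window size, step, and threshold are assumptions for illustration.

```python
from collections import deque

class StreamingClassifier:
    """Buffers incoming samples and classifies overlapping windows."""

    def __init__(self, window_size, step, classify):
        self.window = deque(maxlen=window_size)  # oldest samples fall out
        self.step = step
        self.classify = classify
        self._since_last = 0

    def push(self, sample):
        """Add one sample; return a label when a new window is due, else None."""
        self.window.append(sample)
        self._since_last += 1
        if len(self.window) == self.window.maxlen and self._since_last >= self.step:
            self._since_last = 0
            return self.classify(list(self.window))
        return None

def toy_model(window, threshold=12.0):
    """Stand-in for a trained model: thresholds the mean magnitude."""
    return "active" if sum(window) / len(window) > threshold else "still"

# One-second windows at 52Hz, re-evaluated every half second.
stream = StreamingClassifier(window_size=52, step=26, classify=toy_model)

# Hypothetical magnitude stream: one second still, then one second active.
incoming = [9.8] * 52 + [15.0] * 52
results = [label for label in map(stream.push, incoming) if label is not None]
print(results)
```

Overlapping windows trade extra computation for faster reaction to a change in activity.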

In some cases, it makes sense to run the detection algorithm locally on the sensor. This is viable if the events that you are interested in can be identified from the data of one Movesense sensor and the classification algorithm fits in the available code space. For multi-sensor detection, the place to run the algorithm is either a mobile app or the cloud, whichever works better for consolidating the data of all sensors in your setup.

If you want to discuss your own project with the Movesense team’s experts, don’t hesitate to contact us and book a meeting. You are also welcome to contact Kaasa solution GmbH directly.

We wish you exciting experimentation with your machine learning projects!

Further reading:

Editorial: Machine Learning Approaches to Human Movement Analysis, Frontiers in Bioengineering and Biotechnology, Jan 2021